https://www.techpowerup.com/348825/...oads-4-gb-ai-model-on-your-pc-without-consent

'Don't be evil'

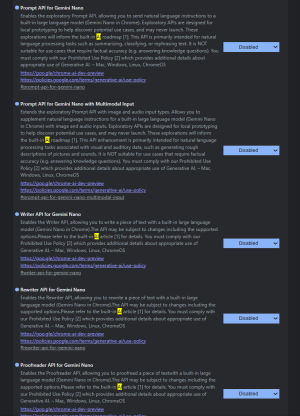

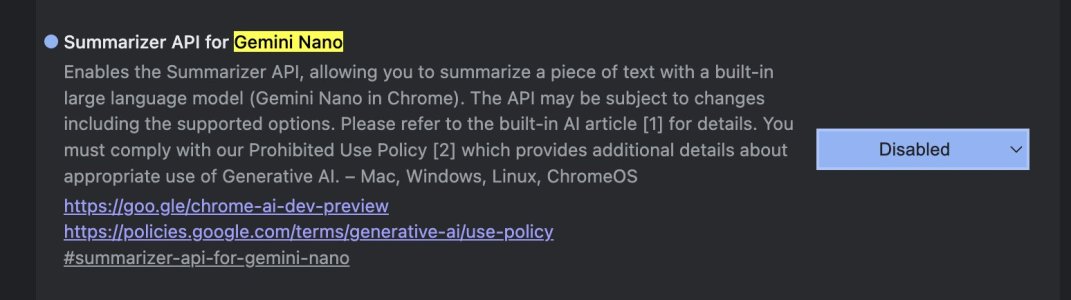

Google Chrome is reportedly downloading a 4 GB AI model onto user PCs without consent or prior notice, and with no easy way for less technical users to discover it on their own. According to Alexander Hanff, who publishes a blog called "That Privacy Guy," Chrome downloads and installs a local Gemini Nano model automatically, without any user input. Chrome starts the process by creating an "OptGuideOnDeviceModel" folder, which contains a "weights.bin" file that is exactly 4 GB. This file is used by Google's Gemini Nano model, which handles on-device scam detection, AI-assisted writing, and other tasks. The entire process takes about 15 minutes to complete, all without the user's knowledge.
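If you want to check your own machine, here is a rough Python sketch that walks a directory tree and reports any "weights.bin" sitting under an "OptGuideOnDeviceModel" folder, along with its size. The two names come from the article; the function name `find_model_weights` is made up for illustration, and the article does not say where Chrome puts the folder, so the search root is something you supply yourself.

```python
import os
from pathlib import Path

# Folder and file names as reported in the article.
MODEL_DIR_NAME = "OptGuideOnDeviceModel"
WEIGHTS_FILE_NAME = "weights.bin"


def find_model_weights(root):
    """Return a list of (path, size_in_bytes) for every weights.bin
    found at or below a folder named OptGuideOnDeviceModel under root.

    Hypothetical helper: the search root is an assumption supplied by
    the caller, since the article does not give the install location.
    """
    hits = []
    for dirpath, _dirnames, filenames in os.walk(root):
        # Only count files whose path passes through the model folder.
        if MODEL_DIR_NAME in Path(dirpath).parts and WEIGHTS_FILE_NAME in filenames:
            full_path = Path(dirpath) / WEIGHTS_FILE_NAME
            hits.append((full_path, full_path.stat().st_size))
    return hits
```

Call it with whatever directory you suspect holds Chrome's data, for example `find_model_weights(Path.home())`, and divide the size by `1024**3` to get GiB. Scanning a whole home directory can take a while, and an empty result only means nothing was found under the root you chose.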

