> That show was a crime against humanity!

It had so much potential. Pissing away 10 million per episode was impressive though.

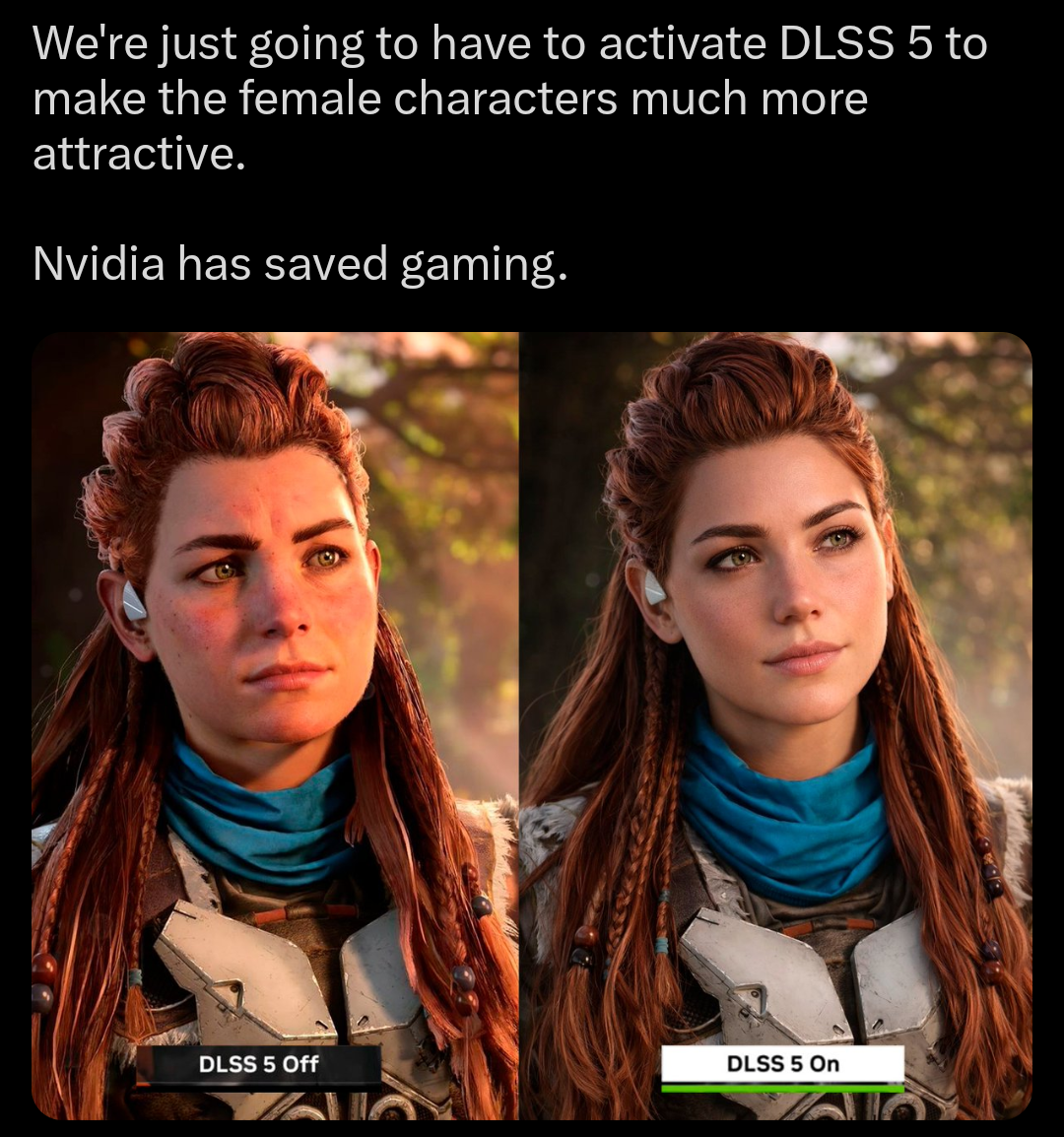

I just realized their AI "Ana de Armas'd" Grace. It's a goon filter in its current form.

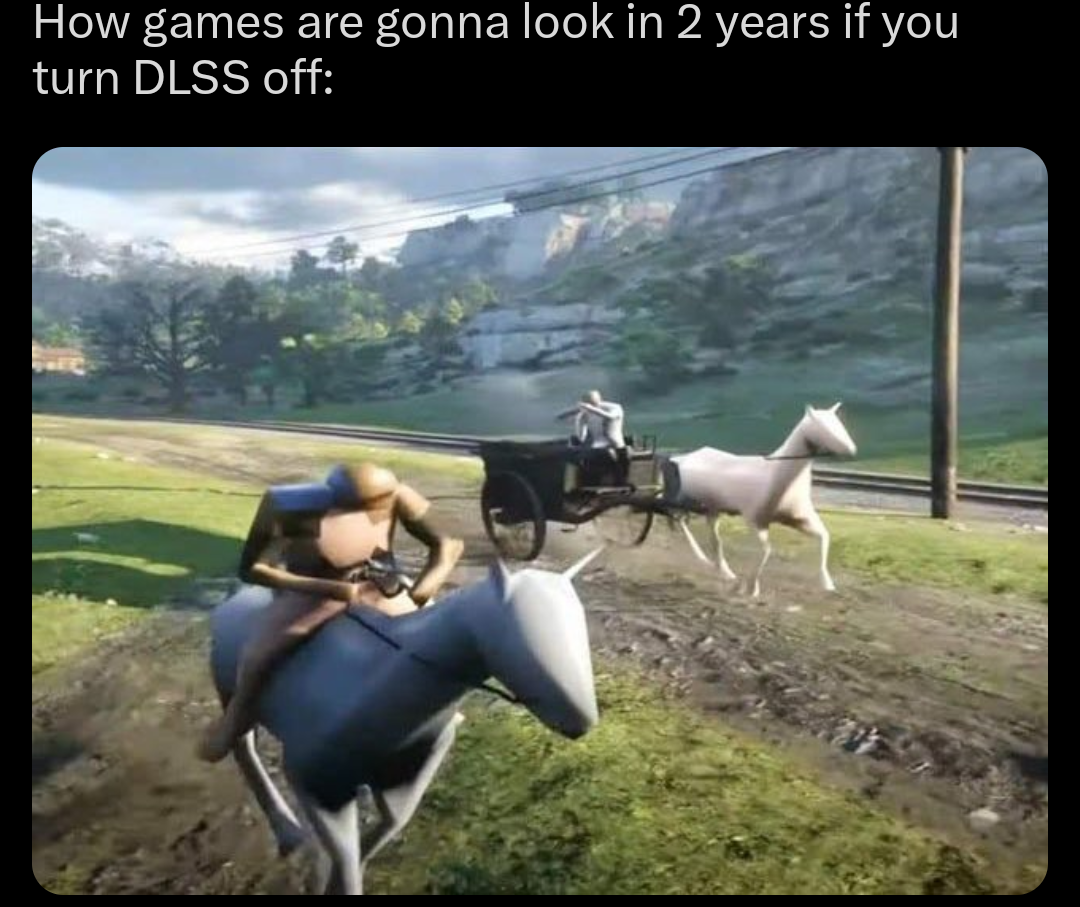

> I don't get the pushback. Graphics have been stagnant for over 10 years. We keep throwing more and more TFLOPS at it for minor upgrades.
>
> Nvidia creates an algorithm that makes meh materials realistic and implements realistic lighting, and everyone is clutching their pearls. Neural rendering may end up as impactful as shaders and bump mapping were.
>
> I honestly don't care what it does or how it does it. I want the game to look better.

I want to see what the artist originally intended, not what some computer thinks looks better.

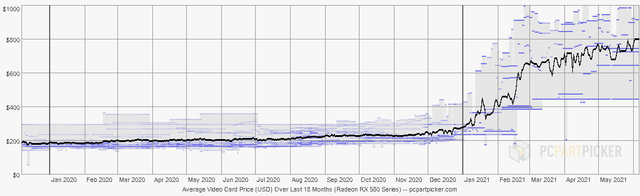

I suspect the pushback is due to AI causing memory and GPU prices to rise. I didn't know we were huge on respecting the art direction from AAA studios nowadays.

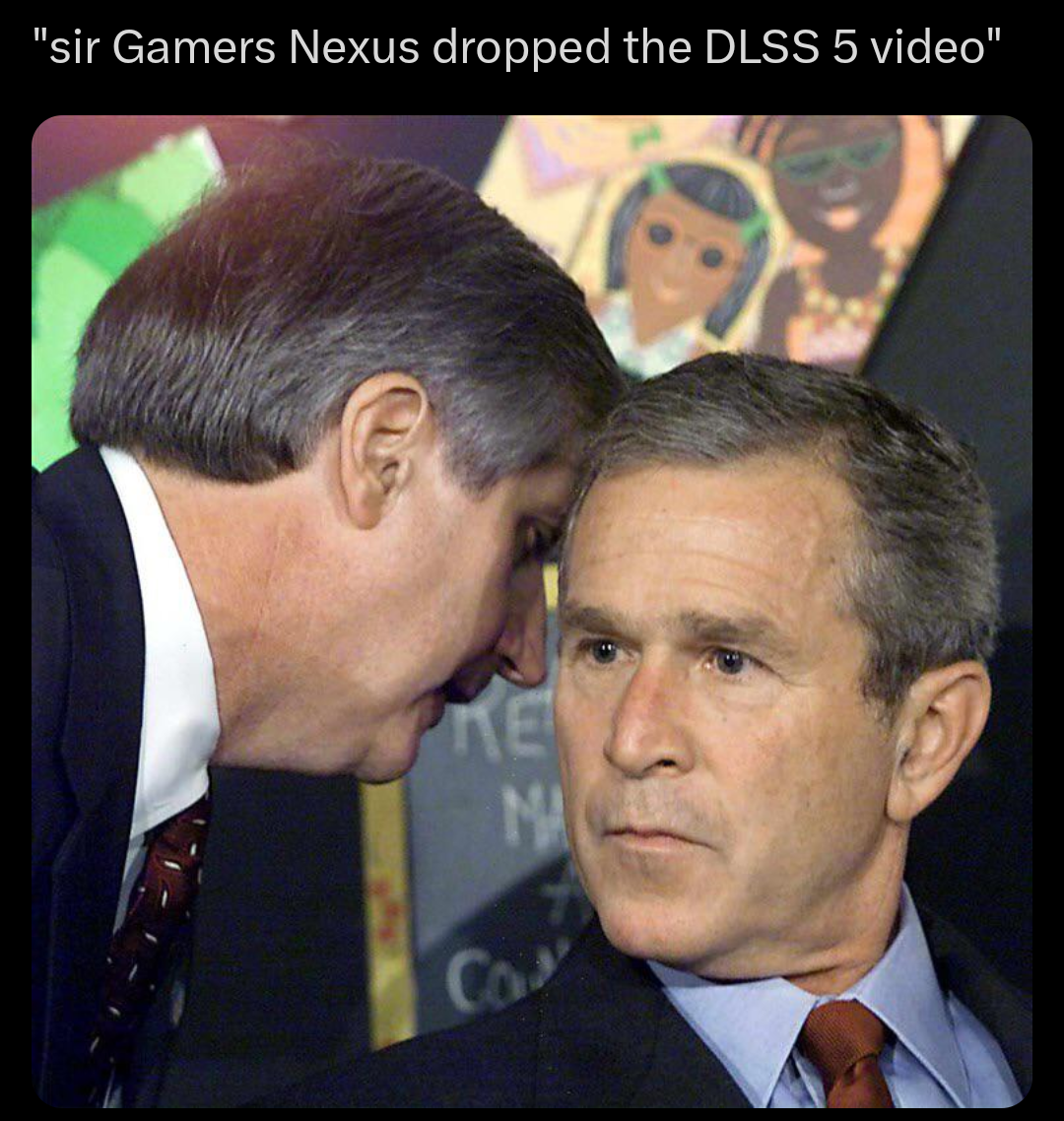

> Without seeing what the motion blur looks like with DLSS off, it seems a bit hard to say.

Here's a video of it.

> I want to see what the artist originally intended, not what some computer thinks looks better.

The few artists who will be left will intend whatever the computer thinks looks better.

> I want to see what the artist originally intended, not what some computer thinks looks better.

But this is directly controlled by the artists. Also, some of the most popular mods for games are reshades, models and texture enhancements.

> I agree. I am not 100% against AI assisting development. I am not against it helping make placeholders, or helping clean up some type of asset prior to review and adjustment. But DLSS 5 seems to be doing exactly what we don't want to see. It is essentially creating the end product without final adjustments from the artists, more or less allowing sloppier work with what is essentially a crappy filter thrown on top of it. And like all upscaling and whatnot, there will be some downside. We really don't need more of that on individual assets.

DLSS 5 is going to be even worse because it's a compounding problem, with errors that multiply in magnitude. First you have upscaling, which has its own issues and errors. Then you add in framegen, which adds more issues and errors. Then you top that with the new DLSS 5 stuff, which causes more issues and errors while compounding all the issues and errors from the previous stuff done to the images. It ends up being multiplicative compounding errors.
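To put rough numbers on the compounding argument, here is a toy sketch. It assumes each stage scales up whatever error it is handed by its own error rate, which is only one simple way to read "multiplicative". The per-stage rates and the names `STAGE_ERROR`, `additive_error` and `compounded_error` are made up for illustration and are not measurements of any DLSS version.

```python
from math import prod

# Made-up per-stage error rates, for illustration only (not measurements).
STAGE_ERROR = {
    "upscaling": 0.05,
    "frame generation": 0.08,
    "neural rendering": 0.10,
}

def additive_error(errors):
    """Total error if each stage only added its own error to a clean input."""
    return sum(errors)

def compounded_error(errors):
    """Total error if each stage also scales up the error it was handed."""
    return prod(1.0 + e for e in errors) - 1.0

rates = list(STAGE_ERROR.values())
print(f"additive:   {additive_error(rates):.2%}")
print(f"compounded: {compounded_error(rates):.2%}")
```

With these invented rates the compounded figure comes out a bit above the simple sum (roughly 24.7% against 23%). The gap widens as the per-stage rates or the number of stages grow, which is the shape of the argument above, not evidence for it.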

> This will be coming fast. Right now the semantics you add are only things like skin, fur, hair, satin, plastic, foliage, water (just 256 different ones right now).

You can't add specific prompts like that to a model that runs on a rendered image, because there will be different things visible on each frame. Well, technically you could, by running a visual AI model on the frame to determine what is in the picture, but I doubt that can be made feasible in a real-time application.

> If they do offer a way to give hints to the model like this, devs would have to commit more to Nvidia proprietary tech, since that's not just drag and drop. Granted, there was that figure that the vast majority of the market is Nvidia anyway, but it does make me think of PhysX.

I think the best case is them allowing devs to use their own LoRAs.

> That may be. To be honest, Nvidia has said multiple conflicting things about this tech. It's lighting, it's not a filter, it is a filter, it enhances geometry, it leaves models alone. I don't think they were actually prepared for any backlash. They seem pretty out of touch. Maybe when Jensen said 5 enhances geometry he was just wrong and no one there wants to correct him? I don't know lol

They are trying to piss on you and claim it's raining, nothing new. It's clearly an AI filter that they are trying to claim is something more than that.

> Whatever DLSSSSS-5+ extra did made the game graphics look tons better.

That's certainly... a take.

> For years everyone complained about not getting realistic graphics, especially after paying 3K+ for a graphics card. Then they show that you can and everyone starts crying about it.

1. They're not realistic, they are overprocessed. This is the dynamic/showroom setting on TVs.

> I must have been in another timeline the past 30 years. For me every generation has been about the march towards photorealism. With obvious exclusions like cel-shaded and pixel art games.

2. Photorealism is not and has never been the goal of gaming graphics. Of some games, yes, and it is a valid goal as an available tool. Butchering existing game visual design, both in geometry and rendering, ain't it.

> I must have been in another timeline the past 30 years. For me every generation has been about the march towards photorealism. With obvious exclusions like cel-shaded and pixel art games.
>
> For years everyone complained about not getting realistic graphics, especially after paying 3K+ for a graphics card. Then they show that you can and everyone starts crying about it.

What is the most successful gaming platform?

> The plan to replace GPUs with simple AI accelerators and software (to increase profit) is well underway at Team Green!
>
> Eventually game engines won't even be needed anymore. Games will be coded with just descriptors, and the AI accelerator and software will take care of the rest of the rendering.

Real-time vibe code rendering... seems like a nightmare.

Yaass, slay!People are putting to much emotion into their comments, its the same people that screamed you must not buy Hogwarts Legacy. Its the same people reacting the same way about every thing they FEEL is bad. Then don't use DLSS, don't buy Nvidia, don't watch a certain channel or podcast but instead we must cancel everything all the time.

If a game came out with the same graphics without DLSS on everyone would be blown away by the amazing graphics, mind blowing, next gen............

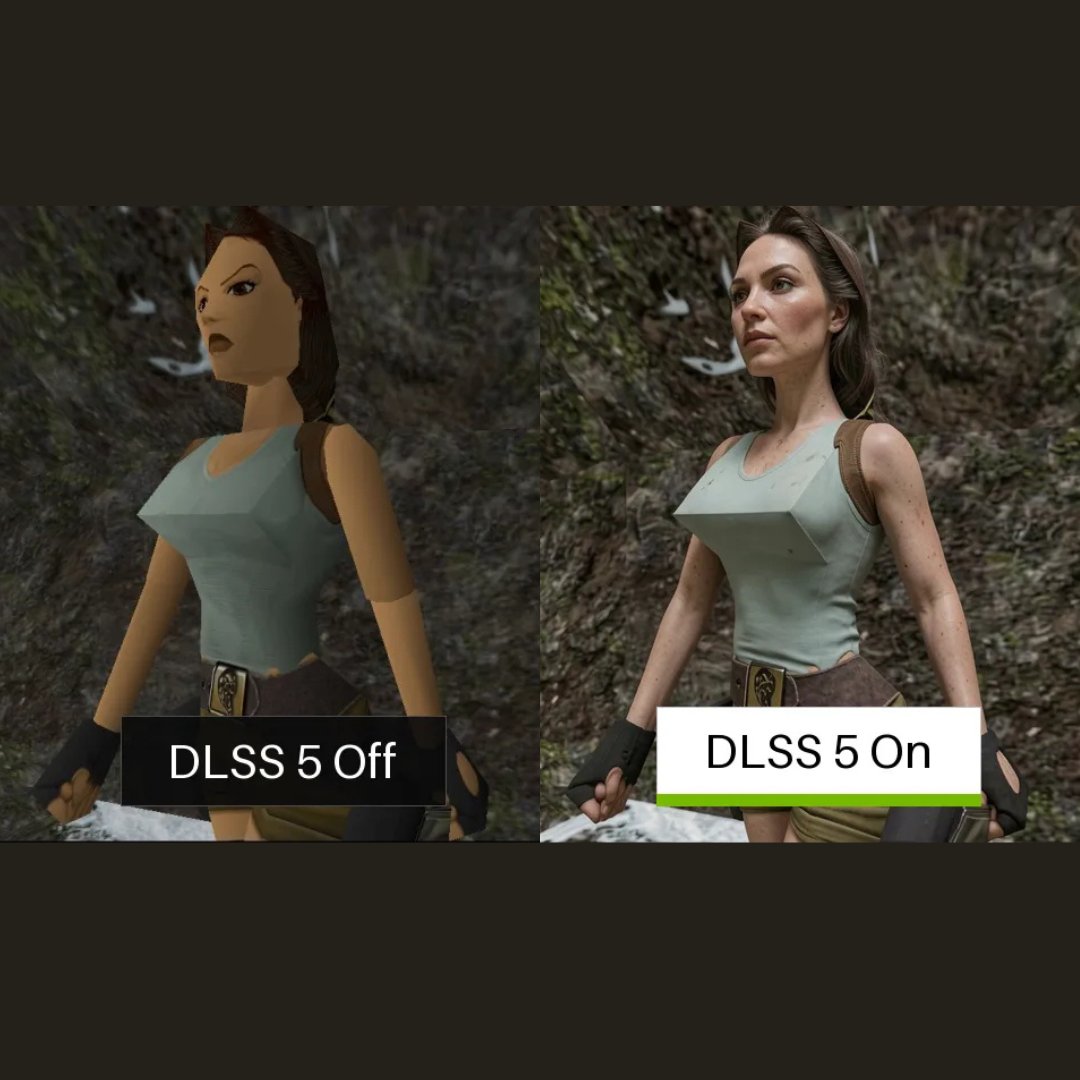

to me it is night and day comparison, The image on the right is 10000000x better then the original. So basically "don't believe your lying eyes"

View attachment 792342

84% dislike ratio doesn't lie.

Agreed.

I'm not technically up on all the shading and lighting stuff. But I'll say that most of the images I've seen are brighter than the comparison shots. Is the detail more apparent on the right? Yes. But it also changed the model itself, so what is it really doing, and is it going to produce good changes in the model every time? Why are a lot of the shadows gone? The background in the image on the right is much brighter than the original. Same with the Assassin's Creed images earlier: a lot of the shadows were gone.

> You can't add specific prompts like that to a model that runs on a rendered image because there will be different things visible on each frame. Well, technically you could by running a visual AI model on the frame to determine what is on the picture, but I doubt that can be made feasible in a real time application.

Nvidia will not want to do it, because they will want something that has not only temporal stability but an exact run-to-run, machine-to-machine look. Others could do it differently, though, and you could make systems that are deterministic with that level of semantics. A lot of the randomness we see in models is injected by design so they don't get stuck.
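A minimal sketch of that last point, using a toy "pick one of three options" step rather than any real rendering model. The scores, temperature and the helper names `softmax`, `pick` and `run` are all assumptions made up for the example. Greedy selection is repeatable by construction, while sampling only becomes repeatable once the seed is pinned.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def pick(logits, temperature=1.0, rng=None):
    """Greedy argmax when rng is None, otherwise sample with injected randomness."""
    if rng is None:
        return max(range(len(logits)), key=lambda i: logits[i])
    weights = softmax(logits, temperature)
    return rng.choices(range(len(logits)), weights=weights, k=1)[0]

def run(logits, steps, seed=None):
    """One 'run': greedy if no seed is given, seeded sampling otherwise."""
    rng = None if seed is None else random.Random(seed)
    return [pick(logits, rng=rng) for _ in range(steps)]

scores = [2.0, 1.5, 0.3]                          # hypothetical model scores
print(run(scores, 5))                             # greedy: same result every run
print(run(scores, 5, seed=42) == run(scores, 5, seed=42))  # same seed, same picks
```

The same idea scales up in principle: drop the sampling step or fix its seed and the output stops varying between runs, at the cost of whatever the randomness was buying.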

> Here's a video of it.

We don't see what the non-DLSS footage looks like in slow-mo in that video (with motion blur, I presume; that's a franchise with a history of complaints).

> It's new and will get better over time. Just like all the advancements in GPU technology and drivers have always been. The closer to photorealism the happier I am. Cartoony games can stay cartoony if they like, but games that feature real people should look like real people and objects. This is getting us there.

Plastic surgeons and Instagram filters love you.

> If they do offer a way to give hints to the model like this devs would have to commit more to Nvidia proprietary tech, since that's not just drag and drop. Granted, there was that figure that the vast majority of the market is Nvidia anyway but does make me think of PhysX.

It is using the Nvidia Streamline framework to talk to the DLSS 5 implementation, but those semantics are just a simple int derived from a simple material string value. Passing them to a different model would be easy (the Unreal, Blender, etc. asset that describes them is not proprietary), a bit like motion vectors are not a big deal to pass to any upscaler once you have them.
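For a sense of how little is involved, a rough sketch of a material-string-to-int mapping that fits in one byte per pixel, matching the "just 256" figure mentioned earlier in the thread. This is not the Streamline API; the table contents and the names `MATERIALS`, `encode_materials` and `decode_materials` are hypothetical.

```python
# Hypothetical material table. The thread only says the labels are things like
# skin, fur, hair, satin, plastic, foliage, water, with at most 256 of them.
MATERIALS = ["unknown", "skin", "fur", "hair", "satin", "plastic", "foliage", "water"]
MATERIAL_ID = {name: index for index, name in enumerate(MATERIALS)}
assert len(MATERIALS) <= 256  # every ID must fit in a single byte

def encode_materials(names):
    """Map per-pixel material strings to one byte each; unlisted names become 'unknown'."""
    fallback = MATERIAL_ID["unknown"]
    return bytes(MATERIAL_ID.get(name, fallback) for name in names)

def decode_materials(buffer):
    """Inverse mapping, e.g. for a different model that reads the same buffer."""
    return [MATERIALS[value] for value in buffer]

row = ["skin", "skin", "hair", "water", "chrome"]  # one made-up row of pixels
ids = encode_materials(row)
print(list(ids))              # [1, 1, 3, 7, 0]
print(decode_materials(ids))  # ['skin', 'skin', 'hair', 'water', 'unknown']
```

Since the buffer is just bytes plus a lookup table, any consumer that agrees on the table could read it, which is the portability point being made above.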

> It's new and will get better over time.

I'm sure it will.

> I do love you guys but sometimes it's like talking to a brick wall.

If the masses wake up from the constant... push of crap. Then we can bankroll development of tech that would be cool.